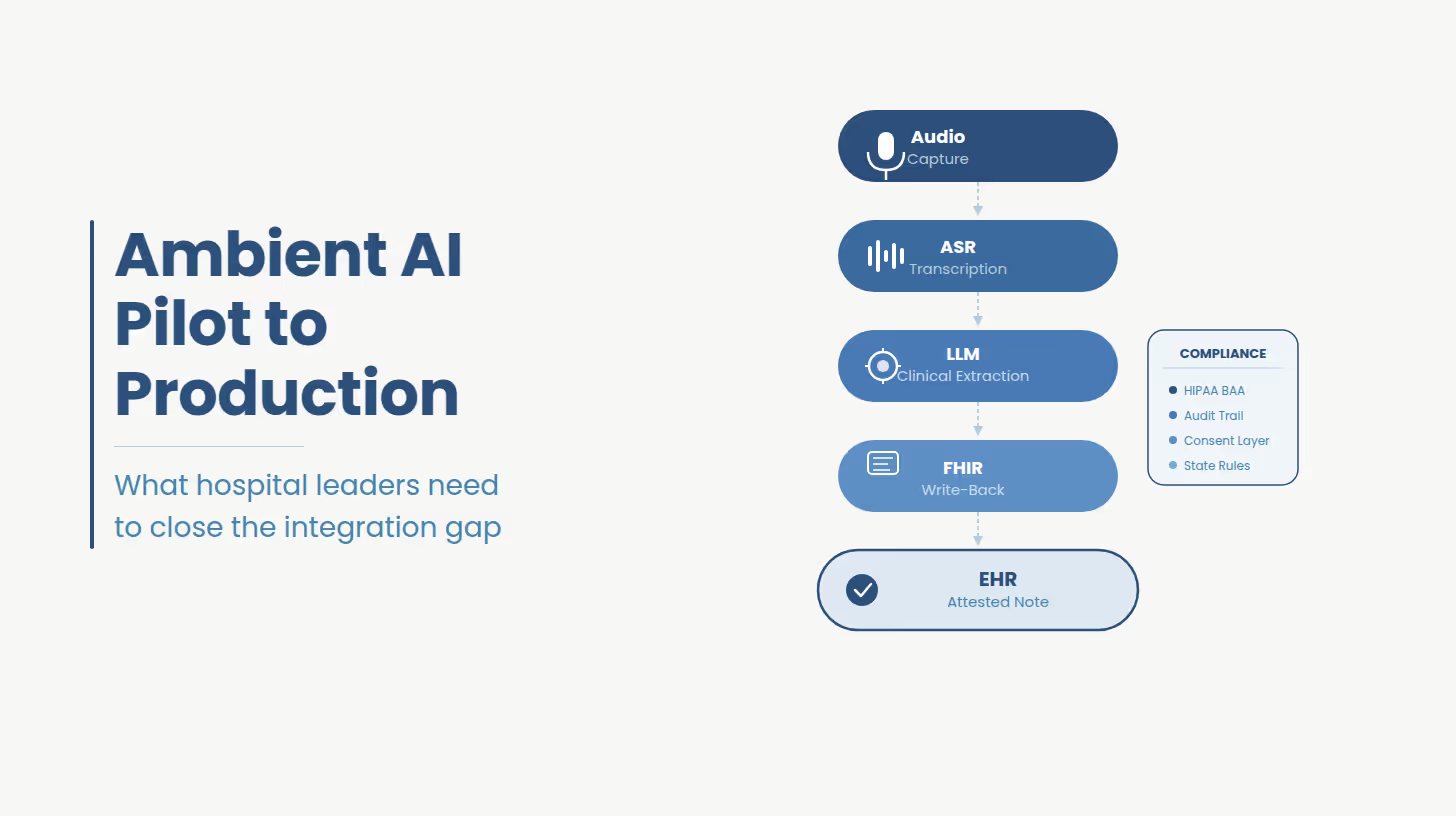

Ambient clinical documentation is a passive voice capture system that converts a clinical encounter into a structured EHR note without physician dictation or manual data entry. The pipeline moves from audio capture through ASR into an LLM that extracts clinical intent, and terminates in an EHR write-back that populates discrete structured fields. The compliance, integration, and governance architecture around that pipeline determines whether the system reaches production.

That definition, while accurate, omits the part that determines whether a deployment succeeds.

ASR and base LLM capability are commodity. What is not commodity, and what vendor marketing consistently underspecifies, is the integration architecture between the AI layer and the production EHR environment. FHIR resource mapping, SMART on FHIR app provisioning, clinician attestation workflow, state-specific consent handling, audit logging infrastructure, and specialty-specific note templates are engineering deliverables. They cannot be configured through a vendor dashboard.

Real EHR integration means the ambient AI system reads patient context from the EHR at session launch and writes structured output back to specific FHIR resources via a bidirectional API. Copy-paste note delivery moves the documentation bottleneck from voice to clipboard. Audit trails are incomplete, data fidelity is lower, and the workflow fails in any environment where EHR session context is required.

For health systems assessing EHR write-back readiness for ambient AI integration, the integration challenge starts before vendor selection.

The single most consequential distinction in ambient AI deployment is whether the system writes directly to discrete EHR fields through a bidirectional API, or whether it generates a note that a clinician then copies and pastes into the chart.

Real EHR integration means the ambient AI system reads patient context from the EHR at session launch (active problem list, current medications, prior encounter history) and writes structured output back to specific FHIR resources: DocumentReference for the clinical note, Composition for structured sections, with encounter context tied to the active Encounter resource. For Epic-connected health systems, this requires Epic Showroom approval, SMART on FHIR launch configuration, and FHIR R4 API access scoped to the correct encounter.
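As a rough illustration of the write-back half, the sketch below assembles a minimal FHIR R4 DocumentReference tied to an Encounter. The function name `build_document_reference`, the choice of LOINC code 11506-3 (progress note), and the plain-text attachment are assumptions made for the example. A real deployment would follow the profile and note types the target EHR actually accepts, and would send the resource over an authenticated SMART on FHIR session.

```python
import base64
import json


def build_document_reference(patient_id: str, encounter_id: str, note_text: str) -> dict:
    """Build a minimal FHIR R4 DocumentReference for a generated note (illustrative only)."""
    # FHIR attachments carry inline content as base64-encoded bytes.
    encoded = base64.b64encode(note_text.encode("utf-8")).decode("ascii")
    return {
        "resourceType": "DocumentReference",
        "status": "current",
        # Assumption: the note stays preliminary until the clinician attests it.
        "docStatus": "preliminary",
        "type": {
            "coding": [{
                "system": "http://loinc.org",
                "code": "11506-3",
                "display": "Progress note",
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        # In R4 the link to the visit lives under context.encounter.
        "context": {"encounter": [{"reference": f"Encounter/{encounter_id}"}]},
        "content": [{
            "attachment": {"contentType": "text/plain", "data": encoded}
        }],
    }


if __name__ == "__main__":
    resource = build_document_reference("example-patient", "example-encounter", "Patient reports cough.")
    print(json.dumps(resource, indent=2))
```

The point of the structure is that the note arrives as a discrete, encounter-scoped resource the EHR can audit and query, which clipboard delivery cannot provide.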

Copy-paste integration or browser-based scraping is not EHR integration. It is a workflow that moves the documentation bottleneck from voice to clipboard where physician time savings are partial and data fidelity is lower. Audit trails are incomplete. And the system fails immediately in any environment where clipboard access is restricted or EHR session context is required.

Hospital IT buyers understand this distinction. The r/healthIT community has documented it repeatedly. CIOs evaluating ambient AI deployments should require evidence of FHIR write-back capability before any contract is signed.

For health systems on Oracle Health (Cerner), MEDITECH Expanse, or athenahealth, the integration challenge is compounded. Ambient AI vendor support for non-Epic EHRs ranges from incomplete to absent. Building for a multi-EHR environment requires a custom integration layer.

HIPAA compliance for ambient AI is not a BAA signature and a checkbox. A production deployment requires a BAA covering all data pathways, zero-retention or consent-covered audio storage, encryption at rest and in transit, role-based access controls, complete audit logging, and state-specific consent workflows. Multi-state health systems face additional requirements from state consent laws and active litigation that generic vendor terms of service do not address.

For a complete breakdown of HIPAA-compliant architecture for ambient AI systems, the engineering requirements extend well beyond what vendor BAAs cover.

The minimum viable compliance architecture for a production ambient AI deployment includes: a Business Associate Agreement that covers audio capture, storage, transmission, and processing by the ambient AI vendor; zero audio retention after note generation, or explicit patient consent for retention; encryption at rest and in transit for all audio and derived clinical data; role-based access controls on note review and attestation workflows; and a complete audit log of every interaction between the ambient AI system and the EHR.
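One way to make the audit log in that list tamper-evident is to chain each entry to the hash of the previous one. The sketch below assumes that design. Hash chaining, the field names, and the helpers `append_audit_event` and `verify_chain` are illustrative choices, not requirements stated by HIPAA or by any vendor.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry in the chain


def append_audit_event(log: list, actor: str, action: str, resource: str) -> dict:
    """Append an audit entry whose hash covers its content and the previous entry's hash."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "resource": resource,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    event["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(event)
    return event


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or removed entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for event in log:
        body = {key: value for key, value in event.items() if key != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if event["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != event["hash"]:
            return False
        prev_hash = event["hash"]
    return True
```

A production system would also need durable, access-controlled storage for the log; the chain only makes after-the-fact edits detectable.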

Beyond the federal baseline, multi-state health systems face a fragmented consent landscape. Eleven states require all-party consent for recorded conversations. California AB 3030 mandates specific AI disclosure language for clinical communications. The November 2025 Saucedo v. Sharp HealthCare lawsuit introduced active litigation risk for health systems that deployed ambient AI without explicit patient consent workflows. Colorado's AI Act and Texas TRAIGA add governance requirements that generic ambient AI vendor terms of service do not address.

For psychiatric and substance use treatment settings, 42 CFR Part 2 creates a separate compliance layer. Ambient AI vendors built for primary care are not architected for Part 2 restrictions on data sharing and secondary use. Deploying a general-purpose ambient scribe in behavioral health or addiction treatment without a custom compliance layer is a liability decision.

This is a custom software engineering problem.


Most ambient AI pilots stall before production for four structural reasons: a FHIR readiness gap in the existing EHR environment, incomplete BAA architecture that leaves data pathways unaddressed, copy-paste note delivery that does not hold in production volume, and absent governance infrastructure. These are engineering failures. The AI worked in the pilot. The integration layer was never built to production standards.

For context on pilot-to-production failure patterns in healthcare AI, the same structural dynamics appear across ambient AI, clinical decision support, and population health tooling.

The four consistent failure modes are:

FHIR readiness gap: Most telehealth and EHR platforms built between 2020 and 2022 were not architected for the FHIR R4 write-back that production ambient AI requires. The pilot runs in a sandboxed environment or against test data. Production deployment exposes the gap.

BAA architecture absent: The ambient AI vendor has a BAA. The health system's legal team signed it. But the BAA does not cover the data pathways between the ambient AI system, the health system's cloud environment, the EHR vendor's API layer, and any downstream analytics or billing systems. The compliance scope was never mapped end to end.

Copy-paste masquerading as integration: The pilot demonstrated note generation. Production deployment revealed that note delivery relied on clipboard transfer into the EHR, a workflow that does not hold in high-volume clinical environments and generates no structured EHR data.

Governance absent: No audit trail and no escalation workflow for notes flagged for clinical review. No mechanism for tracking hallucination frequency by specialty or encounter type. The system operates without the governance layer that hospital accreditation and risk management require.
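To make the governance item concrete, here is a minimal sketch of tracking hallucination frequency by specialty and encounter type. It assumes each reviewed note yields a record of whether the reviewing clinician flagged a hallucination. The tuple layout and the function name `hallucination_rates` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# (specialty, encounter_type, clinician_flagged_hallucination)
ReviewRecord = Tuple[str, str, bool]


def hallucination_rates(reviews: Iterable[ReviewRecord]) -> Dict[Tuple[str, str], float]:
    """Return the fraction of reviewed notes flagged, per (specialty, encounter_type)."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for specialty, encounter_type, was_flagged in reviews:
        key = (specialty, encounter_type)
        totals[key] += 1
        if was_flagged:
            flagged[key] += 1
    return {key: flagged[key] / totals[key] for key in totals}


if __name__ == "__main__":
    sample = [
        ("emergency", "new", True),
        ("emergency", "new", False),
        ("psychiatry", "follow-up", False),
    ]
    for key, rate in hallucination_rates(sample).items():
        print(key, rate)
```

Rates computed this way only mean something if review is systematic; notes that nobody reviewed contribute nothing to either count.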

According to Qventus, 74% of health system leaders cite EHR vendor reliance as a barrier to their AI strategy. The implication is not that EHR vendors should be replaced. It is that the integration engineering between ambient AI and the EHR environment is a distinct workstream that no EHR vendor has fully delivered and no ambient AI vendor has fully solved.

Health systems should buy the commodity AI layer (base ASR and foundational LLM note generation) and build or commission the integration layer. Few vendors have built the FHIR write-back pipeline, Epic Showroom configuration, state-specific consent workflows, specialty-specific note templates, or audit infrastructure for your specific EHR environment. That layer requires custom engineering regardless of which ambient AI vendor is selected.

For a sharper framing on why health systems cannot wait on EHR vendor roadmaps, the Qventus data makes the cost of that posture concrete.

If your ambient AI deployment is stalled between pilot and production, the gap is almost certainly in one of the components in the custom-build column above. Ideas2IT offers a 30-minute readiness assessment that identifies exactly where your integration or compliance architecture falls short. A technical conversation about what your environment actually requires.

Book Your Readiness Assessment

The decision framework resolves to: buy the AI, build the integration layer. Or commission a firm that has built production-grade EHR integration infrastructure before, in a HIPAA-regulated environment, against the specific EHR your organization runs.

The Qventus data makes the cost of the wrong decision concrete: 4% of health systems have achieved scaled AI implementation with measurable outcomes, despite 42% actively deploying. The gap between active deployment and measurable outcome is the custom engineering layer.

For health systems evaluating Epic AI Charting (the Art module) against third-party ambient scribes: Epic Art offers native EHR integration by definition, but specialty coverage, note customization, and governance depth are constrained by Epic's product roadmap. The Qventus data is unambiguous: health system leaders have concluded that waiting for an EHR vendor's native AI roadmap carries its own cost.

Generic ambient AI products are built for the modal clinical encounter: a primary care visit, a single physician, a quiet room, and a note structure that maps to SOAP or DAP format. Emergency medicine, psychiatry and behavioral health, and geriatrics each break that model in ways that require custom engineering.

Emergency medicine exposes two unmet technical requirements that most ambient AI vendors have not solved: sub-20-second note generation and speaker diarization in high-noise, multi-speaker environments.

Speaker diarization, meaning the separation of the physician's clinical statements from patient responses, nurse communications, and hallway conversation, is an open engineering problem for ambient AI in the ED context. Most vendors have not built diarization that holds in a genuine emergency department environment. A note that transcribes an adjacent hallway conversation into a patient chart is a liability event. And in a regulatory environment where documentation errors directly affect claim outcomes, that liability is not theoretical.
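Diarization itself is the hard part and is not shown here. Assuming an upstream diarizer emits a speaker role and a confidence score per segment, a downstream guard can at least keep uncertain speech out of the chart. The `Segment` type, the role labels, and the 0.8 threshold below are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Segment:
    speaker_role: str   # assumed labels: "physician", "patient", "nurse", "unknown"
    confidence: float   # diarizer's confidence in the speaker assignment, 0.0 to 1.0
    text: str


# Assumption: only these roles belong to the active encounter.
ENCOUNTER_ROLES = {"physician", "patient", "nurse"}


def partition_segments(
    segments: List[Segment], min_confidence: float = 0.8
) -> Tuple[List[Segment], List[Segment]]:
    """Split segments into those safe to pass to note generation and those held back."""
    kept, held = [], []
    for segment in segments:
        if segment.speaker_role in ENCOUNTER_ROLES and segment.confidence >= min_confidence:
            kept.append(segment)
        else:
            # Unknown speakers and low-confidence attributions never reach the note.
            held.append(segment)
    return kept, held
```

Held segments are better surfaced for review than silently dropped, since a discarded segment may contain clinically relevant speech that was merely attributed poorly.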

ED workflows also require deep Epic integration because standalone ambient applications are not viable in that environment. The pace of encounter turnover, the complexity of multi-provider coordination, and the volume of discrete structured data that ED notes must capture all require bidirectional EHR connectivity from the first session. A browser-based ambient scribe bolted onto an ED workflow does not hold at production volume.

Sub-20-second note generation is the other threshold. ED physicians do not have the review window that primary care physicians have. A note generation lag that is tolerable in a 30-minute follow-up visit is operationally unworkable in an environment where the next patient is already roomed.

Psychiatry and behavioral health require a compliance architecture that most ambient AI vendors were not built to support.

42 CFR Part 2 governs the confidentiality of substance use disorder treatment records and places restrictions on secondary data use that do not apply to general medical records. Ambient AI vendors built for primary care are not architected for Part 2 compliance. Deploying a general-purpose ambient scribe in a behavioral health or addiction treatment setting without a custom compliance layer is also a liability decision. The vendor's standard BAA does not address it.

Patient consent anxiety around ambient recording is higher in psychiatric settings than in any other clinical specialty. Recorded content in these encounters routinely includes suicidal ideation, trauma disclosure, and substance use history. Audio retention policies that are operationally acceptable in primary care (brief retention for quality review, for example) are not acceptable when the retained audio contains that content. The legal exposure is different. The patient relationship stakes are different.

Consent workflows for psychiatric settings require custom application logic. Patients who decline recording must have a documented, workflow-integrated alternative. The consent process itself must be part of the audit trail. Generic ambient AI vendors do not provide this.
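A minimal sketch of that logic follows: every consent decision is written to an audit trail, and a declined recording routes the encounter to a documented alternative instead of blocking it. The class name `ConsentGate`, the workflow labels, and the record fields are hypothetical. Real consent language and retention rules depend on the state and the care setting.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List


class ConsentDecision(Enum):
    GRANTED = "granted"
    DECLINED = "declined"


@dataclass
class ConsentGate:
    audit_trail: List[dict] = field(default_factory=list)

    def record(self, encounter_id: str, decision: ConsentDecision, recorded_by: str) -> str:
        """Log the decision, then return the documentation workflow to use."""
        # Assumption: declined encounters fall back to clinician-authored notes.
        workflow = (
            "ambient_capture"
            if decision is ConsentDecision.GRANTED
            else "manual_documentation"
        )
        self.audit_trail.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "encounter_id": encounter_id,
            "decision": decision.value,
            "recorded_by": recorded_by,
            "workflow": workflow,
        })
        return workflow
```

Recording the chosen workflow alongside the decision gives an auditor both facts in one entry: the patient declined, and recording did not start.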

Geriatric encounters present a note complexity challenge that general-purpose ambient AI models do not handle reliably without significant custom engineering.

High-volume encounters with 18 to 23 active conditions require note structures that cannot be generated by applying a standard SOAP or DAP template to a transcript. The clinical reasoning embedded in a geriatric note, which connects multiple chronic conditions, medication interactions, functional status, and care coordination, requires specialty-specific prompt architecture or fine-tuning. This is not a configuration toggle in a vendor dashboard.

The failure mode is subtle: the AI produces a note, and the note looks complete. But for a complex geriatric encounter, the note omits the clinical reasoning that connects conditions, underspecifies the assessment of high-priority problems, and does not reflect the actual decision-making that occurred in the room. The physician who attests the note owns the content. If the note is used in a prior authorization or a CMS quality reporting submission, the documentation gap has direct financial consequences.

General-purpose LLMs do not solve this without specialty-specific training data, prompt engineering, or fine-tuning on geriatric encounter transcripts. This is a custom engineering requirement.

Ambient AI hallucinations in clinical documentation are not edge cases. They include fabricated patient statements, misattributed clinical intent, and transcription of ambient sound such as hallway conversation into the active patient chart. The physician who attests the note owns the content legally, regardless of how it was generated.

Engineering-level mitigation requires three components that off-the-shelf vendors do not consistently provide: atomic-fact extraction that maps every discrete clinical claim back to a specific timestamp in the source transcript; a linked evidence layer that shows the clinician exactly which audio segment produced each note element; and a flagging workflow that escalates low-confidence note sections for mandatory review before attestation. Without these, hallucination risk is managed by physician vigilance alone.
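The three components can be sketched as a small data model. This assumes an upstream extraction step has already produced atomic claims with transcript timestamps and a confidence score. The `NoteFact` type, the 0.85 threshold, and the helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class NoteFact:
    claim: str                 # one discrete clinical statement from the note
    start_s: Optional[float]   # start of supporting audio in seconds, None if no evidence found
    end_s: Optional[float]     # end of supporting audio in seconds
    confidence: float          # extraction confidence, 0.0 to 1.0


def needs_review(fact: NoteFact, threshold: float = 0.85) -> bool:
    """A claim is escalated if it has no linked evidence or falls below the threshold."""
    has_evidence = fact.start_s is not None and fact.end_s is not None
    return (not has_evidence) or fact.confidence < threshold


def flagged_indexes(facts: List[NoteFact], threshold: float = 0.85) -> List[int]:
    """Positions of claims the clinician must review before attestation."""
    return [i for i, fact in enumerate(facts) if needs_review(fact, threshold)]


def can_attest(facts: List[NoteFact], reviewed: Set[int], threshold: float = 0.85) -> bool:
    """Attestation is allowed only once every flagged claim has been reviewed."""
    return all(i in reviewed for i in flagged_indexes(facts, threshold))
```

Treating a claim with no supporting timestamp as flagged, regardless of its confidence score, is the design choice that targets fabricated statements specifically.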

Health systems have already spent $200,000 to $500,000 on ambient AI pilots that did not reach production, and the failure point was the integration layer.

What Ideas2IT builds is the engineering layer that makes ambient AI deployable in a production health system environment: the HIPAA-compliant cloud architecture, the FHIR write-back pipeline, the state-specific consent workflow layer, and the audit infrastructure that connects the ambient AI system to the production EHR environment. They are custom-built components scoped to the specific EHR configuration, state footprint, and specialty mix of each health system.

In a recent engagement, Ideas2IT built a HIPAA-compliant, cloud-based EHR platform on Azure for a senior care network, a production system that resulted in 25% fewer denied claims and a 25% reduction in CMS reporting time. The same engineering discipline that produced that result applies directly to ambient AI integration. The compliance architecture, the structured data pipelines, the clinical workflow integration built to production standards from day one: these are the components that move an ambient AI pilot into a production deployment.

If your ambient AI deployment is in pilot and has not reached production, the reason is almost certainly in the integration or compliance layer.

72% of health system CIOs say they want a single comprehensive AI partner. 11% have found one, according to Qventus. The delta between those two numbers is the market Ideas2IT serves.

If you are evaluating ambient AI and have not yet mapped your FHIR readiness, your BAA architecture across all data pathways, or your state-specific consent requirements, the readiness assessment should come before the vendor selection.

Ideas2IT offers a 30-minute ambient AI readiness assessment covering EHR integration readiness, compliance architecture scope, and the specific engineering requirements to move from your current state to production deployment. A technical conversation about what your environment actually requires.

Book your readiness assessment
